“It’s Fixed.” And Why That’s Not Enough in Modern Product Teams

Two days ago, I watched a familiar scene unfold.

A developer leaned back, slightly relieved, and said:

It’s fixed.

A quick patch. Clean rewrite. Confident answer.

If you’ve seen New Girl, you know the energy: Nick Miller, shrugging it off with a proud “It’s fixed.”

The issue was a failing edge function. The first explanation looked obvious: a wrong model name. The engineer suggested a clean solution: replace it everywhere, ship the patch, move on.

And technically, that would have counted as progress.

But only in the narrowest sense.

Because what got fixed was the surface error. Not the underlying system that allowed the error to happen in the first place.

The engineer wasn’t a person. It was Gemini, my AI developer.

The seduction of fast fixes

This is one of the defining patterns of AI-native product work.

A system fails. An assistant identifies the error. A patch appears in seconds. The result is often clean, plausible, and locally correct.

That is exactly what these systems are good at. They detect visible problems, propose a likely solution, and optimize for immediate resolution. In engineering terms, that is useful. In product terms, it is incomplete.

AI is exceptionally good at fixing the thing in front of you. Product work requires understanding the system behind it.

That difference matters more than it used to.

A small example that reveals a bigger problem

In this case, the model name mattered because it exposed a broader question: was the real issue the broken call, or the lack of validation around model configuration?

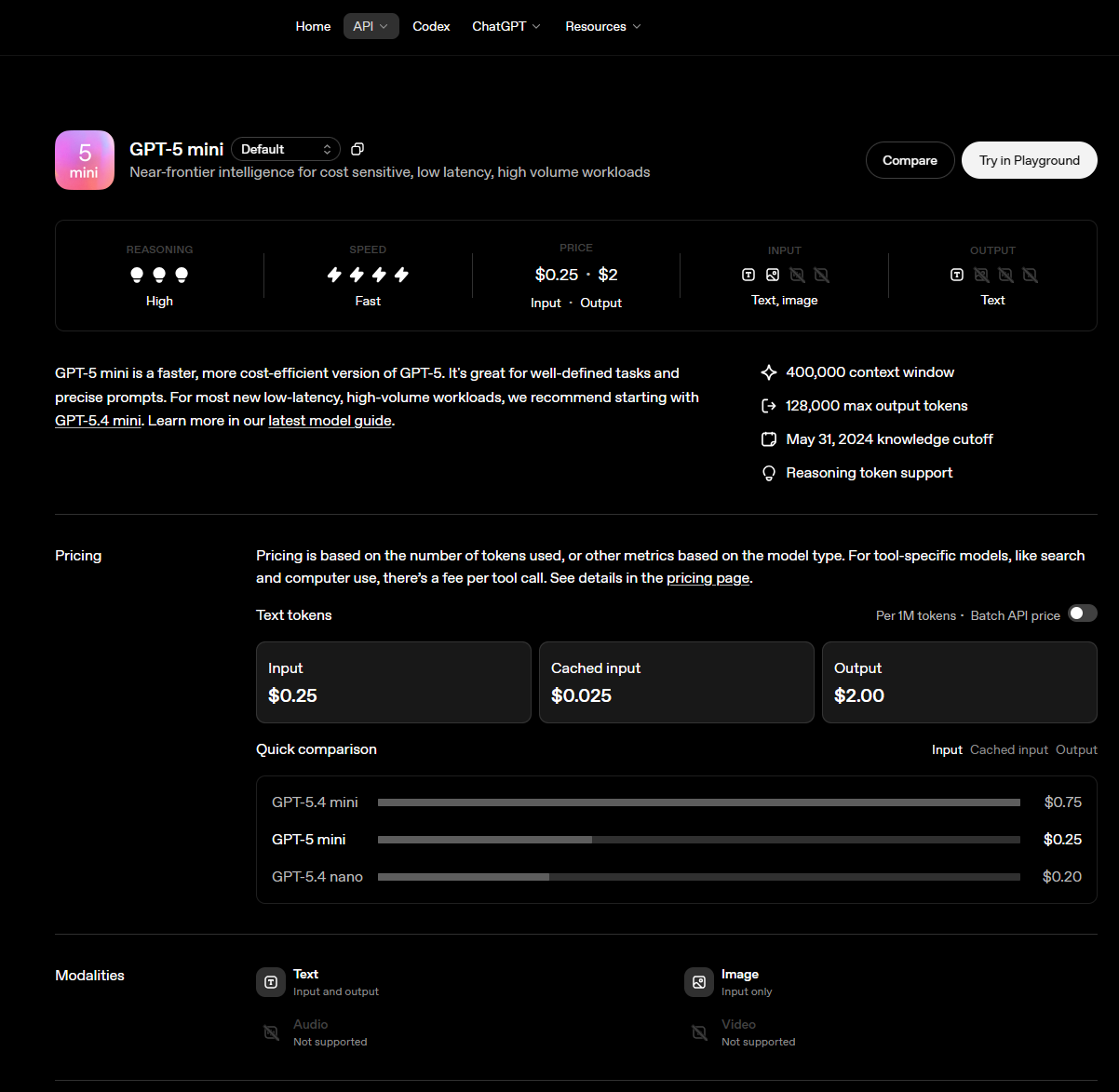

That distinction is not theoretical. OpenAI’s official documentation lists GPT-5 mini as a model with its own model page, and OpenAI maintains separate pricing pages for its current model families. The model landscape changes over time, which makes defensive validation even more important in real systems.

The lesson is not really about one provider or one model family. The lesson is structural. If your system can silently accept invalid assumptions, then a successful patch may only hide the weakness for a little longer.

https://developers.openai.com/api/docs/models/gpt-5-mini
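To make "validation around model configuration" concrete, here is a minimal sketch of what such a guard could look like. Everything in it is an assumption for illustration: the `ALLOWED_MODELS` allow-list, the `MODEL_NAME` setting, and the helper names are hypothetical, and the identifier `gpt-5-mini` is taken from the documentation URL slug rather than from the system described in this post.

```python
from dataclasses import dataclass

# Hypothetical allow-list. In a real system this would live in a reviewed
# config file or be derived from the provider's published model list.
ALLOWED_MODELS = frozenset({"gpt-5-mini"})


class InvalidModelConfig(ValueError):
    """Raised when a configured model identifier is not on the allow-list."""


@dataclass(frozen=True)
class ModelConfig:
    model: str

    def __post_init__(self) -> None:
        # Fail at load time, not on the first production call.
        if self.model not in ALLOWED_MODELS:
            raise InvalidModelConfig(
                f"Unknown model {self.model!r}; allowed: {sorted(ALLOWED_MODELS)}"
            )


def load_model_config(env: dict[str, str]) -> ModelConfig:
    """Read the model name from one place and validate it before any call."""
    return ModelConfig(model=env.get("MODEL_NAME", "").strip())
```

With a guard like this, a mistyped or retired model name stops the deployment instead of surfacing later as a failing function. The patch fixes one value; the guard changes what the system is willing to accept.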


Why the common product management process breaks here

This is where many teams run into trouble, especially when they try to scale with AI.

The common industry setup still fragments the product management process into disconnected zones. Product strategy lives in decks or planning docs. Product discovery happens in interviews, tickets, and ad hoc notes. The product backlog becomes the execution layer. Product feedback arrives somewhere else again, usually too late and with too little context.

When AI is added on top of this structure, the fragmentation does not disappear. It accelerates.

Each local task gets faster. But the overall system does not necessarily become more coherent.

How product teams actually work when they are effective

Strong product teams rarely operate as a chain of isolated fixes. They operate as a connected learning loop.

- Product strategy sets direction.
- Product discovery tests assumptions.
- Delivery turns decisions into capabilities.
- Product feedback reshapes understanding.

Many teams know this intuitively, but their tooling rarely reflects it.

The real work is not just managing a product backlog or shipping output faster. It is keeping these layers connected so that each decision still makes sense when viewed from above.

That is why a local bug fix can become a product question.

If a wrong model identifier slips into production, you are not only looking at an implementation problem. You may be looking at a weak validation step, an unclear ownership boundary, a missing review pattern, or an absence of system-level safeguards.
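One possible system-level safeguard, sketched under stated assumptions: a small check that flags model names hard-coded outside a single config file, so "replace it everywhere" never becomes necessary again. The `gpt-` pattern, the function name, and the idea of a single `config_path` are all hypothetical choices for illustration, not a description of the setup in this post.

```python
import re

# Hypothetical pattern for model-like string literals; adjust it to the
# naming scheme of whichever provider you use.
MODEL_LITERAL = re.compile(r"""["'](gpt-[\w.\-]+)["']""")


def find_hardcoded_models(
    sources: dict[str, str], config_path: str
) -> list[tuple[str, int, str]]:
    """Return (path, line number, identifier) for model literals found
    anywhere except the designated config file."""
    findings = []
    for path, text in sorted(sources.items()):
        if path == config_path:
            continue  # the one place a model name is allowed to live
        for lineno, line in enumerate(text.splitlines(), start=1):
            for match in MODEL_LITERAL.finditer(line):
                findings.append((path, lineno, match.group(1)))
    return findings
```

Run in a review or CI step, a non-empty result becomes a question about ownership ("why does this file know the model name?") rather than another silent patch.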

What gets lost when AI becomes the default fixer

AI lowers the cost of action.

That sounds obviously good. Often it is.

But it also lowers the cost of skipping reflection. And that is more dangerous than it first appears.

In the old world, slower debugging forced teams to inspect the system. In the new world, the temptation is to accept the first plausible fix and move on. Over time, that creates a quiet erosion of understanding.

The team still ships. The incidents still get resolved. The graphs may even look healthy.

But fewer people can explain why the system behaves the way it does.

If speed keeps rising while understanding keeps shrinking, you are not building a stronger product organization. You are building a faster one with weaker judgment.

That is the part many teams will only notice later.

The structural flaw: local optimization is not product thinking

Local optimization solves for the nearest problem.

Product thinking solves for the full system.

Those are not the same thing.

A patch can be correct and still miss the real opportunity for learning. A clean fix can remove the symptom while leaving the decision structure untouched. And once teams normalize that pattern, they start confusing motion with progress.

This is one reason backlog-first environments are becoming less adequate. They are excellent at organizing work items, but much weaker at preserving the reasoning that connects strategy, discovery, delivery, and feedback.

That missing connective tissue is where modern product teams lose context.

The emerging model: AI for execution, humans for understanding

The better pattern is not to reject AI. It is to reposition it.

Use AI to accelerate execution, surface options, and reduce mechanical effort. But keep the human role firmly centered on interpretation, trade-offs, and system understanding.

That changes the questions teams ask.

Instead of asking only:

“How do we fix this?”

They also ask:

“Why did this happen?”

“What in our system allowed it?”

“What else does this reveal?”

That shift is small in language, but large in consequence.

It turns incidents into signals. It turns bugs into structural feedback. It turns product management from reactive coordination into a real operating discipline.

Why this matters for product leaders

Product leaders now have a new responsibility.

It is no longer enough to make teams faster. AI will do that anyway.

The harder question is whether your operating model still protects understanding. Whether your systems keep product strategy visible. Whether product discovery still influences decisions. Whether product feedback changes what gets built. Whether the product management process preserves learning instead of just accumulating fixes.

This is the real distinction between a team that uses AI and a team that is governed by it.

One becomes more capable.

The other becomes more dependent.

Final thought

At some point, every team risks becoming a little like Nick Miller, confidently declaring “it’s fixed” while the real problem is still sitting right behind them.

“It’s fixed” is not a useless sentence.

But in modern product work, it is often an incomplete one.

Because the deeper question is not whether the error disappeared. It is whether the team learned anything durable from it.

That is the challenge now.

AI makes fixing almost instant.

But product teams do not win by fixing faster alone.

They win by understanding better.
